Introduction

The purpose of IP Manager’s testing features is to ensure that everybody in a team can safely contribute to libraries of photonic design IP.

By setting up regression test coverage on a design IP library or process design kit (PDK), the team creates a controlled workflow in which changes to devices and circuits, or changes in underlying algorithms, can be caught.

Design IP has a broad scope, from basic building blocks to full-fledged circuits comprising many components. IP Manager makes no distinction between a small building block and a large circuit: both can be covered with tests.

Changes to design IP can be intentional or unintentional, and IP Manager provides a workflow for both.

An intentional change can, for instance, be a desired parameter change. Suppose that testing a component shows that it performs better when its length parameter is increased by 5%. A designer or library maintainer can change this value in the library. At that point, one or more regression tests will fail because of this change. After verifying that the new results are correct, the responsible person can update the test and/or its data files.

An unintentional change can, for instance, be an algorithm change that alters a component (or circuit) for some or all parameter combinations. It could also be a change in the underlying software or algorithms that alters the behavior of a component. In either case, regression tests will fail. The responsible person can then assess, debug and fix the problem, after which the tests will pass again. The tests therefore also provide quality assurance on the combination of a design IP library and the underlying software.

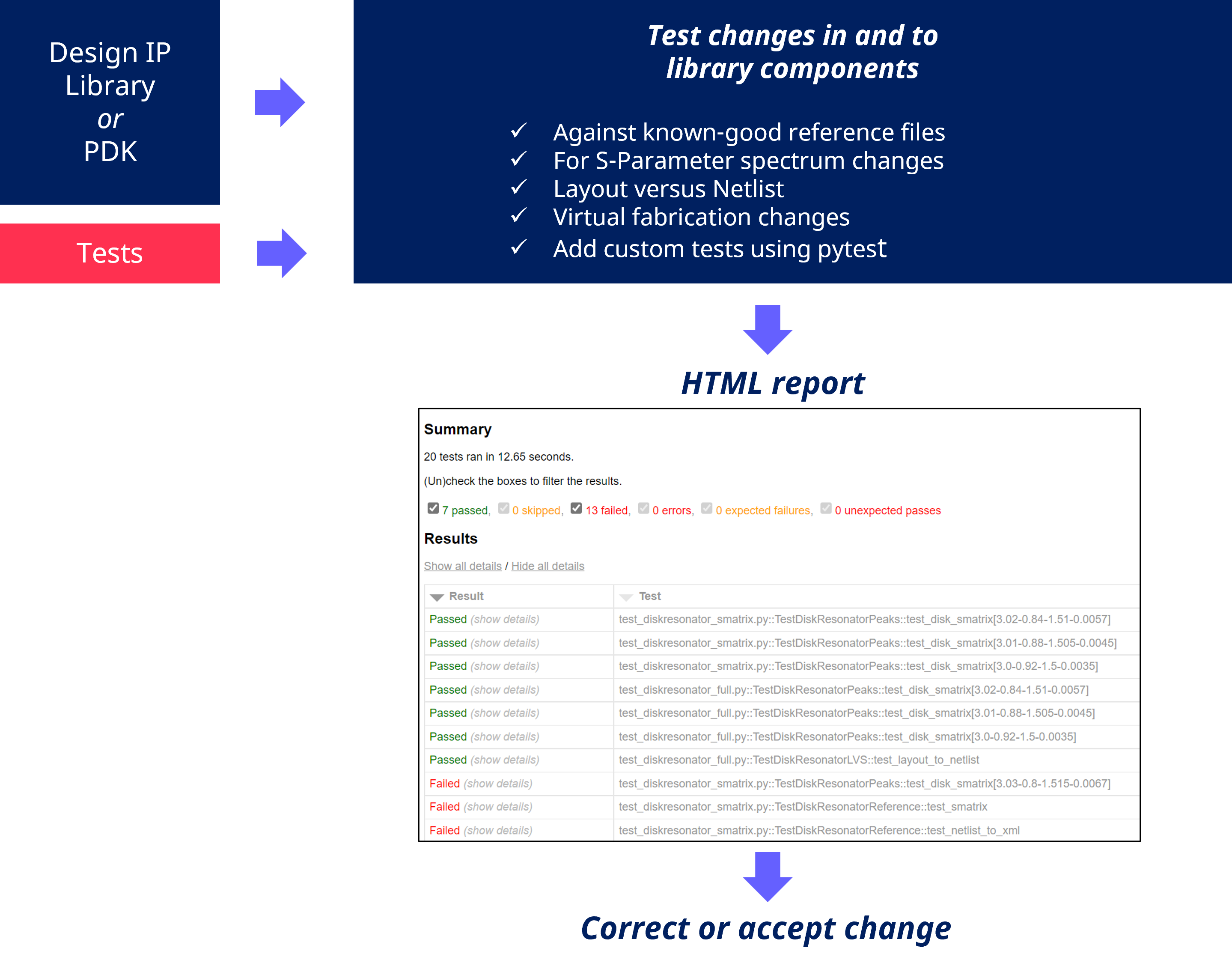

The figure below shows the main features and workflow with the Luceda IP Manager module:

Concept of regression testing and change control with IP Manager

IP Manager provides different types of tests and test infrastructure:

Component reference tests: These compare the data generated by the LayoutView, NetlistView and/or CircuitModelView of an i3.PCell against known-good reference files (also called golden or master files, or sometimes Process-of-Reference, although the latter term properly refers to a semiconductor process technology).

Behavioral Circuit Model tests: These assess features of the S-parameter spectrum of a circuit model against a known-good quantitative outcome (e.g. a bandwidth specification).

Sample tests: These execute Python files containing example code and confirm that they are executable without errors. However, they don’t perform any assertions on the outcome of the code.
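As an illustration of the idea behind a behavioral circuit model test, the sketch below checks the 3 dB bandwidth of a spectrum against a specification. It is plain Python and does not use the IP Manager API: the Lorentzian response, the function names `transmission_db`, `bandwidth_3db` and `meets_bandwidth_spec`, and the specification limits are all assumptions made for this example.

```python
import math


def transmission_db(wavelength_um, center_um=1.55, fwhm_um=0.02):
    """Hypothetical Lorentzian filter response in dB (stand-in for S-parameter data)."""
    detuning = (wavelength_um - center_um) / (fwhm_um / 2)
    power = 1.0 / (1.0 + detuning ** 2)
    return 10 * math.log10(power)


def bandwidth_3db(wavelengths, values_db):
    """Width of the wavelength span in which transmission is within 3 dB of the peak.

    Assumes a single-peaked spectrum, so the span is contiguous.
    """
    peak = max(values_db)
    inside = [w for w, v in zip(wavelengths, values_db) if v >= peak - 3.0]
    return max(inside) - min(inside)


def meets_bandwidth_spec(bandwidth_um, minimum_um, maximum_um):
    """Pass/fail check of a measured bandwidth against assumed specification limits."""
    return minimum_um <= bandwidth_um <= maximum_um


# Sample the hypothetical spectrum from 1.5 um to 1.6 um in 0.1 nm steps.
wavelengths = [1.5 + i * 0.0001 for i in range(1001)]
spectrum = [transmission_db(w) for w in wavelengths]
bw = bandwidth_3db(wavelengths, spectrum)

# The specification (18 nm to 22 nm) is an assumed value for illustration.
passed = meets_bandwidth_spec(bw, minimum_um=0.018, maximum_um=0.022)
print(f"3 dB bandwidth: {bw * 1000:.1f} nm, spec met: {passed}")
```

The point of such a test is that it asserts on a derived quantity (here, a bandwidth) rather than on the raw data, so it keeps passing when the spectrum changes in ways that do not affect the specification.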

After the tests have run, a report is generated that captures their results and output. In this way, anyone changing or monitoring the library quickly gets a pass/fail assessment, and can find out what changed when a test starts failing.
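The sample tests and the pass/fail report described above can be pictured with the following minimal sketch. It uses only the Python standard library and is not how IP Manager itself is implemented: the function `run_samples`, the report format and the two throwaway sample files are hypothetical.

```python
import runpy
import tempfile
from pathlib import Path


def run_samples(paths):
    """Execute each script and record whether it ran without raising an exception.

    No assertions are made on what the scripts compute, only on whether they run.
    """
    report = {}
    for path in paths:
        try:
            runpy.run_path(str(path), run_name="__main__")
            report[path.name] = "PASS"
        except Exception as exc:
            report[path.name] = f"FAIL ({type(exc).__name__}: {exc})"
    return report


# Two throwaway sample files: one that runs cleanly and one that raises.
with tempfile.TemporaryDirectory() as tmp:
    good = Path(tmp) / "sample_ok.py"
    bad = Path(tmp) / "sample_broken.py"
    good.write_text("total = sum(range(10))\n")
    bad.write_text("raise ValueError('missing parameter')\n")
    report = run_samples([good, bad])

# A very small report: one pass/fail line per sample.
for name, outcome in report.items():
    print(f"{name}: {outcome}")
```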

Note

Design IP library developers and maintainers can choose which aspects to cover with tests. Layout, netlist and model can all be tested together, but testing just one aspect of a cell or of a full library is equally possible. It is, however, advisable to make the test coverage as wide as possible from the start. A relatively small investment in defining tests early on, and in building the habits and workflow around them, will pay off considerably in the long term.

Component reference tests

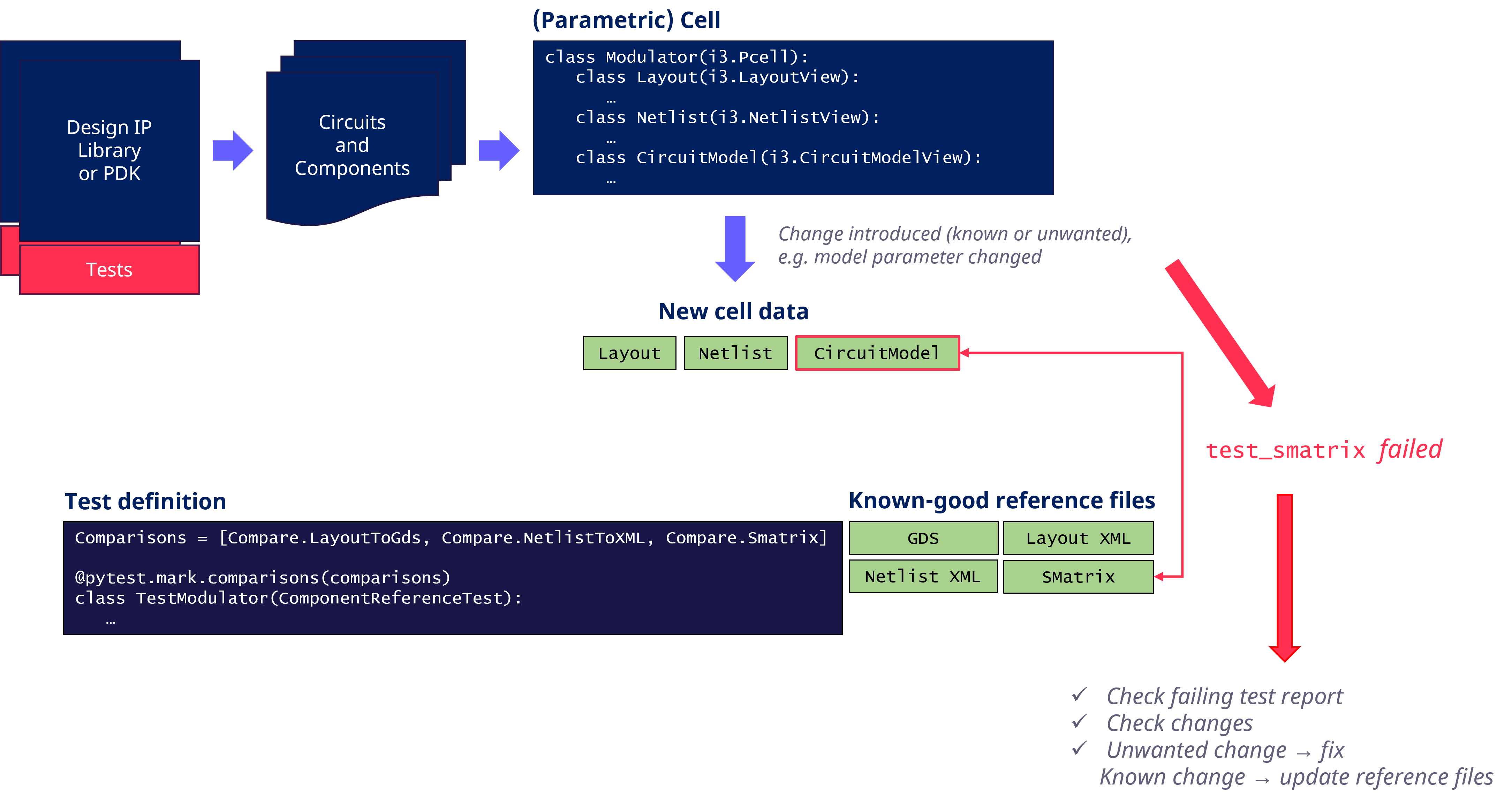

The workflow with component reference tests is further shown in Concept of component reference tests with IP Manager:

Concept of component reference tests with IP Manager

IP Manager compares the current data generated by a PCell’s views (LayoutView, NetlistView or CircuitModelView) to known-good reference files. It can do so in various ways:

GDSII-based tests can catch any changes in GDSII output and input, in addition to general changes to the component.

XML-based tests of the layout can also catch changes to data that is not stored in GDSII, such as ports or waveguide templates.

Netlist and SMatrix tests can catch changes in the connectivity or model of a PCell.

Depending on whether a change is intentional or unintentional, the course of action will differ. In the former case, one regenerates the known-good reference files after carefully verifying that the new output is correct. In the latter case, one reverts the change or fixes the bug, and then validates that all tests pass again.
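The compare-or-regenerate workflow described above can be sketched in plain Python as follows. This is an illustration only and does not use the IP Manager API: `generate_netlist` is a hypothetical stand-in for the data a PCell view would produce, and the JSON reference file and the `regenerate` flag are assumptions made for this example.

```python
import json
import tempfile
from pathlib import Path


def generate_netlist(length_um):
    """Hypothetical stand-in for the data generated by a PCell view."""
    return {
        "instances": ["wg_in", "ring", "wg_out"],
        "nets": [["wg_in:out", "ring:in"], ["ring:out", "wg_out:in"]],
        "length_um": length_um,
    }


def check_against_reference(data, ref_path, regenerate=False):
    """Return True if data matches the reference file.

    With regenerate=True the reference file is overwritten with the current
    data, which should only be done after verifying that the data is correct.
    """
    if regenerate:
        ref_path.write_text(json.dumps(data, indent=2, sort_keys=True))
        return True
    return json.loads(ref_path.read_text()) == data


with tempfile.TemporaryDirectory() as tmp:
    ref = Path(tmp) / "ring_netlist.json"

    # Create the first known-good reference file.
    check_against_reference(generate_netlist(100.0), ref, regenerate=True)

    # No change in the library: the test passes.
    unchanged = check_against_reference(generate_netlist(100.0), ref)

    # The length is increased by 5%: the test fails and flags the change.
    changed = check_against_reference(generate_netlist(105.0), ref)

    # Intentional change, verified by the maintainer: regenerate the reference.
    check_against_reference(generate_netlist(105.0), ref, regenerate=True)
    after_update = check_against_reference(generate_netlist(105.0), ref)

print(unchanged, changed, after_update)
```

For an unintentional change, the same failing comparison would instead lead to a fix in the library code, leaving the reference file untouched.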

Getting started

Follow the tutorials to write your first tests and increase test coverage. After that, you can explore some of the more advanced features.